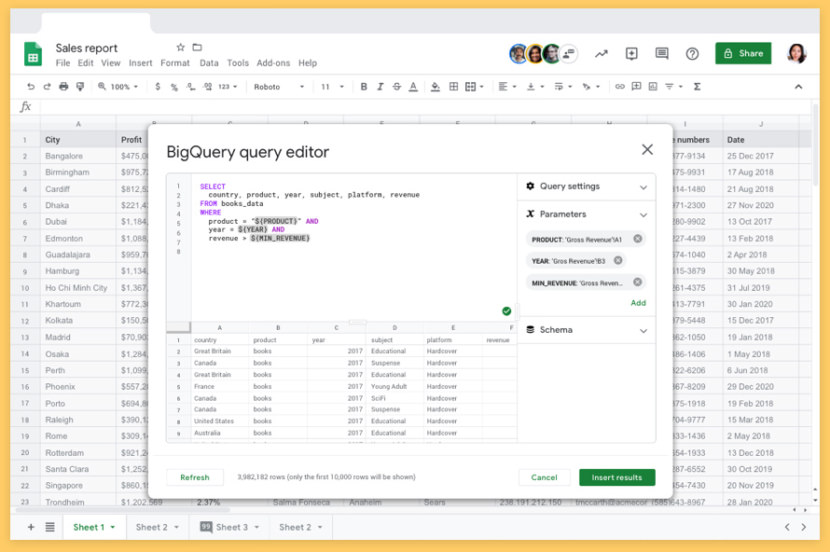

Those can be split across any number of BigQuery projects and datasets, but ultimately you'll need one table per CSV type. IE: If you have 4 different kinds of CSV files, then you'll want 4 different tables.

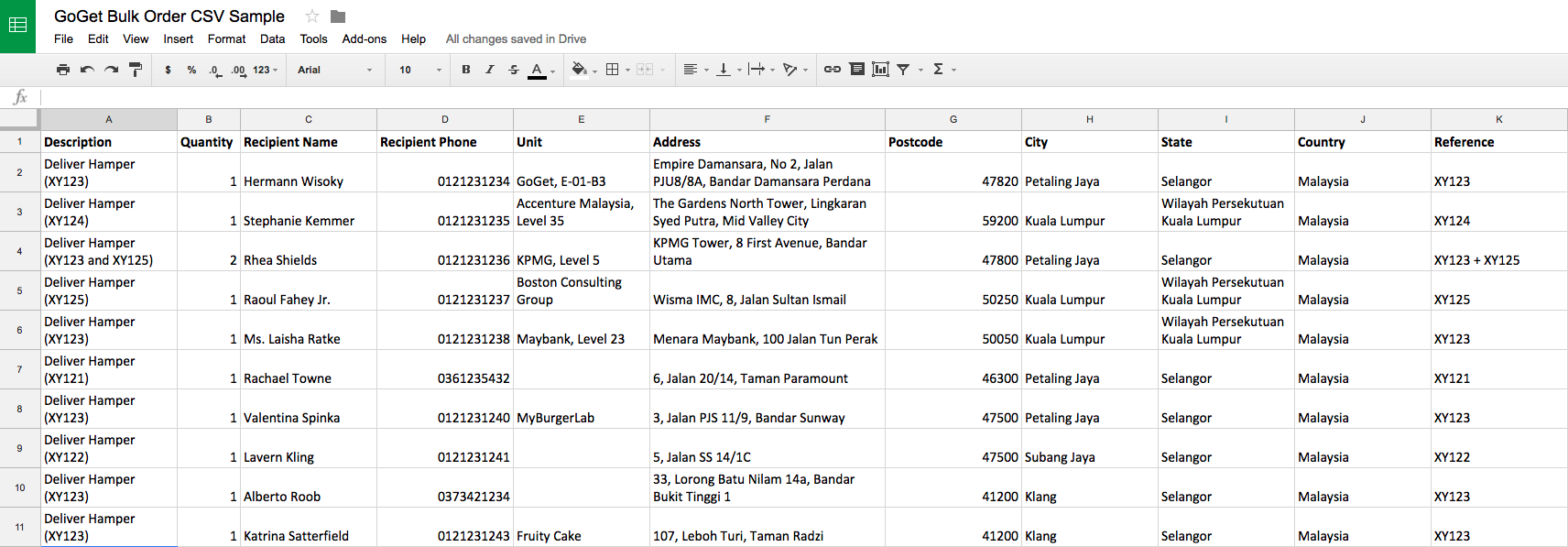

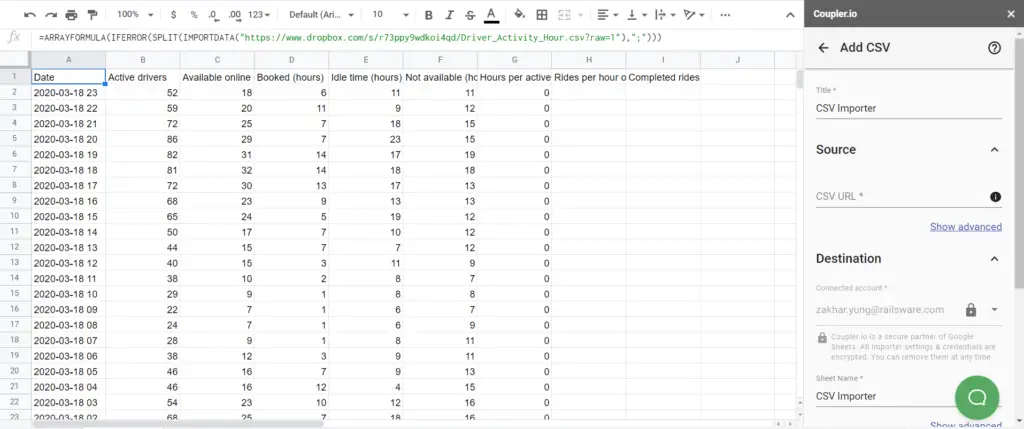

You will need a BigQuery table per type of CSV that you want to load. IE: if the Google Drive Folder URL is " " then the Drive Folder ID is " 1-zFa8svUcirpXFJ2cUn31a" and that's what you'll put into the columns in the Google Sheet. The ID is at the end of the URL when using Google Drive in a browser. You will then update the Google Sheet with the Google Drive ID in the appropriate columns. YOu can have multiple "processed" folders if you'd like, or you can have the same "processed" folder if you want everything to end up in the same place. You will need one google drive "source" folder for each different type of CSV. Then create some Google Drive Folders to house your CSV files. Google BigQuery Project(s), Dataset(s), Table(s) - įirst, Copy the Google Sheet into your own google account.So, I rewrote the process to be a lot more simple, using a single Google Sheet to act as the pipeline manager. I realized for people getting started that was probably overkill and required them to write too much code for themselves.

That process used definitions in code for the types of CSV files detected, the load schema for BigQuery and a few other things. Nice huh? BackgroundĪ while ago, I wrote up a process for loading CSV files into BigQuery using Google Apps Script. This process will even let you configure if you want the automation to truncate or append the data each time to the BigQuery table.

Want to load manage multiple pipelines of CSV files to different BigQuery projects/datasets/tables automatically, and control them all from a single Google Sheet? Yes? Then this article is for you. 6 min read Photo by Luke Chesser / Unsplash.
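Since the Drive folder ID is just the last part of the folder URL, it can be pulled out with a small helper instead of copied by hand. The sketch below is only an illustration and is not part of the article's script: the function name `extractDriveFolderId`, the `/folders/<ID>` URL shape, and the sample URL are assumptions.

```javascript
// Hypothetical helper: returns the folder ID from a Google Drive folder URL.
// Assumes the browser URL contains ".../folders/<ID>", optionally followed
// by a query string such as "?usp=sharing".
function extractDriveFolderId(urlOrId) {
  const text = String(urlOrId).trim();

  // Capture the run of ID characters that follows "/folders/".
  const match = text.match(/\/folders\/([A-Za-z0-9_-]+)/);
  if (match) {
    return match[1];
  }

  // If the input already looks like a bare ID, pass it through unchanged.
  if (/^[A-Za-z0-9_-]+$/.test(text)) {
    return text;
  }

  throw new Error('Could not find a Drive folder ID in: ' + text);
}

// Example with a made-up URL (the ID here is a placeholder, not a real folder).
const exampleFolderId = extractDriveFolderId(
  'https://drive.google.com/drive/folders/abc123_DEF-456?usp=sharing'
);
```

The returned value is what would go into the "source" and "processed" folder columns of the sheet.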
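To make the truncate-or-append choice concrete, here is a minimal sketch of how one row of the pipeline sheet could be turned into a BigQuery load job description. The row fields (`projectId`, `datasetId`, `tableId`, `writeMode`) and the function name are hypothetical and may not match the actual sheet columns. `WRITE_TRUNCATE` and `WRITE_APPEND` are the BigQuery API's write disposition values. Skipping one header row is also an assumption.

```javascript
// Maps a user-friendly sheet value to BigQuery's writeDisposition values.
const WRITE_DISPOSITIONS = new Map([
  ['truncate', 'WRITE_TRUNCATE'], // replace the table contents on each load
  ['append', 'WRITE_APPEND'],     // add rows to the existing table
]);

// Hypothetical: builds a load job resource from one pipeline row.
function buildLoadJob(row) {
  const mode = String(row.writeMode).trim().toLowerCase();
  const writeDisposition = WRITE_DISPOSITIONS.get(mode);
  if (!writeDisposition) {
    throw new Error('Unknown write mode: ' + row.writeMode);
  }

  return {
    configuration: {
      load: {
        // One destination table per CSV type.
        destinationTable: {
          projectId: row.projectId,
          datasetId: row.datasetId,
          tableId: row.tableId,
        },
        sourceFormat: 'CSV',
        skipLeadingRows: 1, // assumes each CSV has a single header row
        writeDisposition: writeDisposition,
      },
    },
  };
}

// Example row with placeholder names.
const exampleJob = buildLoadJob({
  projectId: 'my-project',
  datasetId: 'my_dataset',
  tableId: 'orders',
  writeMode: 'Append',
});
```

In Apps Script with the BigQuery advanced service enabled, a resource shaped like this is the kind of object that `BigQuery.Jobs.insert` accepts along with the project ID and the CSV data. Treat that wiring as an assumption about how the script works rather than a description of it.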
